Demo

Interactive demos and experiments. The current feature is a RAG assistant that answers questions about my work and returns sources.

This demo currently runs on CPU, so responses can be slower than on a GPU-backed setup. I’m prioritizing GPU resources for training experiments and research workflows, and I’ll re-enable faster GPU demos when spare capacity is available.

Tip: short questions feel much faster on CPU.

Retrieval-Augmented Generation (RAG): we retrieve relevant notes, then the model writes an answer grounded in those sources.

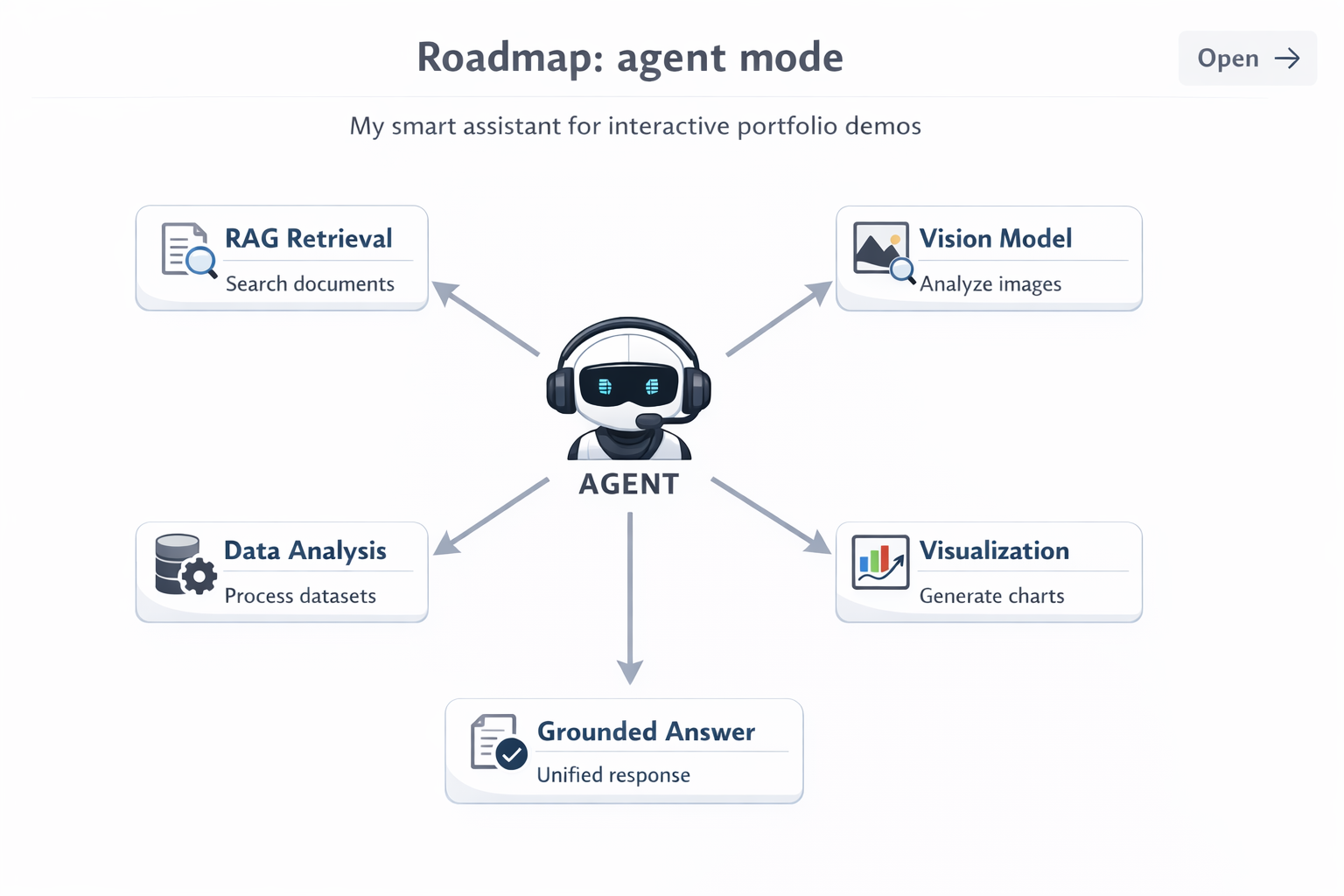
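To make the retrieve-then-generate idea concrete, here is a minimal sketch. It is not the demo's actual code: the notes in `NOTES`, the word-overlap scoring, and the function names `retrieve` and `build_prompt` are all assumptions chosen for illustration. A real system would more likely use embedding search and pass the prompt to a language model.

```python
import re
from collections import Counter

# Hypothetical notes standing in for a real corpus (illustrative only).
NOTES = {
    "segmentation.md": "Notes on semantic segmentation of satellite imagery.",
    "mri.md": "Notes on denoising MRI scans for medical imaging.",
    "plots.md": "Notes on plotting evaluation metrics per data slice.",
}

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def retrieve(question, notes, k=2):
    """Rank notes by word overlap with the question; return up to k names."""
    q = Counter(tokenize(question))
    scored = []
    for name, text in notes.items():
        overlap = sum((q & Counter(tokenize(text))).values())
        if overlap > 0:  # skip notes that share no words with the question
            scored.append((overlap, name))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:k]]

def build_prompt(question, sources, notes):
    """Assemble a prompt that restricts the model to the retrieved sources."""
    context = "\n".join(f"[{name}] {notes[name]}" for name in sources)
    return f"Answer using only these sources:\n{context}\n\nQuestion: {question}"

question = "How do you segment satellite imagery?"
sources = retrieve(question, NOTES)
prompt = build_prompt(question, sources, NOTES)
print(prompt)
```

Because the source names travel with the prompt, the answer can list them alongside the generated text.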

This demo will evolve from “answer questions” to “run my workflow.” Instead of one generic chatbot, an agent will route each request to the right capability: retrieve context from my notes (RAG), run my vision models (e.g., segmentation/detection/embedding search), or run analysis + generate visuals (plots, metrics, evaluation slices).

Then it will combine outputs into one clear, grounded explanation—so visitors can see how I actually work across remote sensing, medical imaging, and data science through a single interface.
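As a rough illustration of routing a request to a capability, the sketch below uses simple keyword rules. The capability names, the keyword lists, and the `route` function are hypothetical. A real agent might instead ask the model itself to choose a tool.

```python
# Hypothetical keyword rules (assumption: the real router may work differently).
ROUTES = [
    ("vision", ("segment", "detect", "image", "embedding")),
    ("analysis", ("plot", "metric", "chart", "evaluate")),
]

def route(request):
    """Return the name of the capability that should handle the request."""
    text = request.lower()
    for capability, keywords in ROUTES:
        if any(word in text for word in keywords):
            return capability
    return "rag"  # default: retrieve context from notes

for request in (
    "Segment the buildings in this tile",
    "Plot accuracy per class",
    "What projects have you worked on?",
):
    print(request, "->", route(request))
```

Falling back to retrieval keeps the default path the same as the current RAG assistant.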

- Tool routing: Decide whether to retrieve notes, run a vision model, or generate a plot.

- Bounded steps: Limits + timeouts keep it stable (especially on CPU).

- Grounded answers: Cite sources or say “not sure” when info isn’t available.
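The last two points can be sketched as follows, under stated assumptions: the step cap, the timeout value, and the helper names `run_bounded` and `grounded_answer` are hypothetical, not taken from the demo. The sketch uses Python's standard `concurrent.futures` to stop waiting on a slow step, and it returns a "not sure" reply when no sources were found.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout

MAX_STEPS = 3         # hypothetical cap on tool calls per request
STEP_TIMEOUT_S = 0.5  # hypothetical per-step time limit, in seconds

def run_bounded(steps, timeout_s=STEP_TIMEOUT_S, max_steps=MAX_STEPS):
    """Run at most max_steps callables in order; stop at the first timeout."""
    results = []
    pool = ThreadPoolExecutor(max_workers=1)
    try:
        for step in steps[:max_steps]:
            future = pool.submit(step)
            try:
                results.append(future.result(timeout=timeout_s))
            except FutureTimeout:
                # We stop waiting; the worker thread itself is not killed.
                break
    finally:
        pool.shutdown(wait=False)
    return results

def grounded_answer(draft, sources):
    """Attach sources to the draft, or decline when there are none."""
    if not sources:
        return "Not sure: I could not find this in my notes."
    return f"{draft} (sources: {', '.join(sources)})"

slow = lambda: time.sleep(0.5) or "late"
print(run_bounded([lambda: "a", slow, lambda: "b"], timeout_s=0.1))
print(grounded_answer("Yes.", ["segmentation.md"]))
print(grounded_answer("Maybe.", []))
```

Note that a thread-based timeout only stops waiting for the step. Truly cancelling heavy work, such as a model call, would need a separate process or cooperative cancellation.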